Faster than a Fact-Check: Our 12-Minute Experiment in AI Candidate Profiles

Also inside: California Democrats could be heading for a crisis

Hi everyone! We’re Meg Schwenzfeier and Jack Welty, builders of Caucus AI and today’s FWIW guest authors. We come from the world of campaign data and analytics – we led analytics on the Harris-Walz campaign. Since then, we’ve been exploring how AI is reshaping the way voters learn about politics and campaigns do analytics.

AI search is the next digital frontier in politics. Instead of typing a candidate’s name into Google and clicking through links, millions of Americans now get answers from AI chatbots and AI-generated summaries. These chatbots have enormous persuasive power, but we know very little about what they’re actually saying or why.

With support from the Analyst Institute, we built Caucus AI to start filling that gap: it tracks what ChatGPT, Grok, and Gemini tell people about candidates and elections, stores every response, and pulls out the sources each chatbot cites. We've been publishing our findings on Substack, and we recently ran an experiment to watch this process play out in real time.

More on that below, but first…

Digital ad spending, by the numbers:

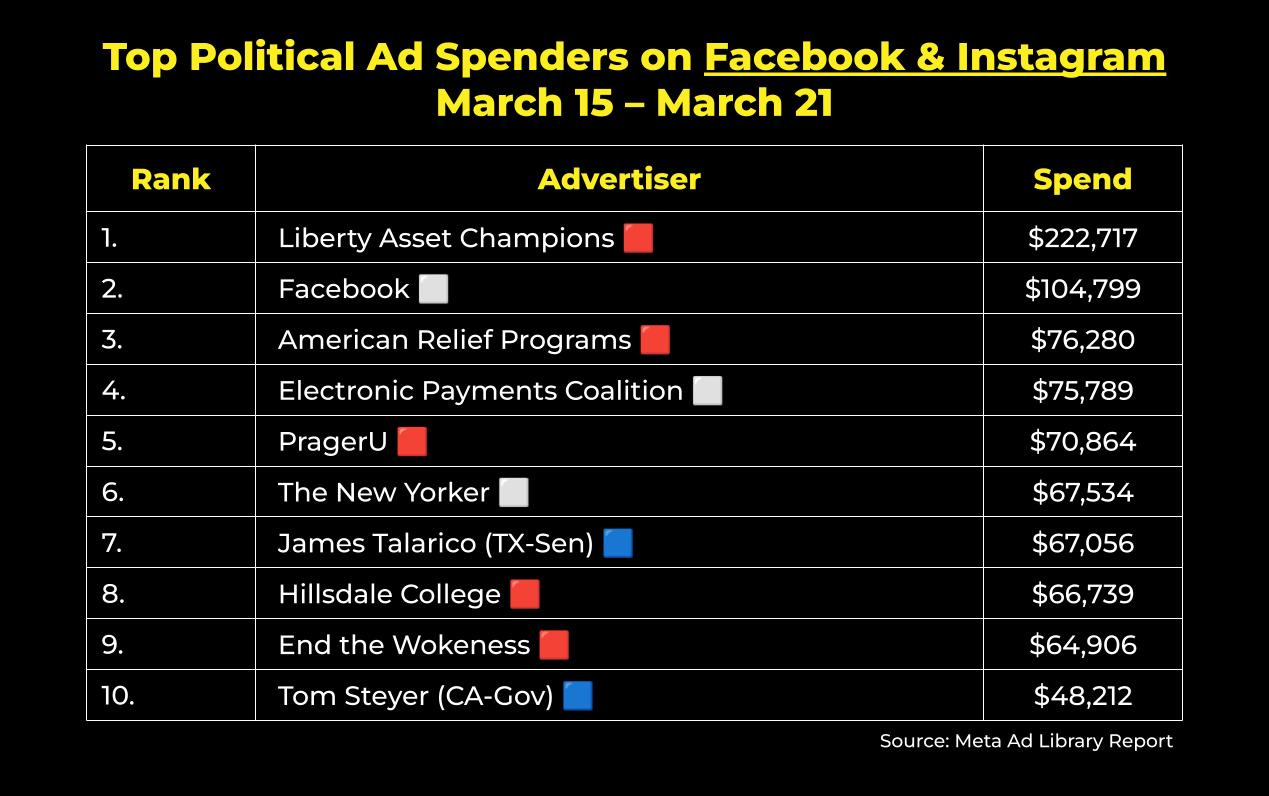

FWIW, U.S. political advertisers spent about $5.6 million on Facebook and Instagram ads last week. Here were the top ten spenders nationwide:

For the second week in a row, James Talarico and Tom Steyer are the only left-leaning spenders on this list. And top spender Liberty Asset Champions outspent both of them combined.

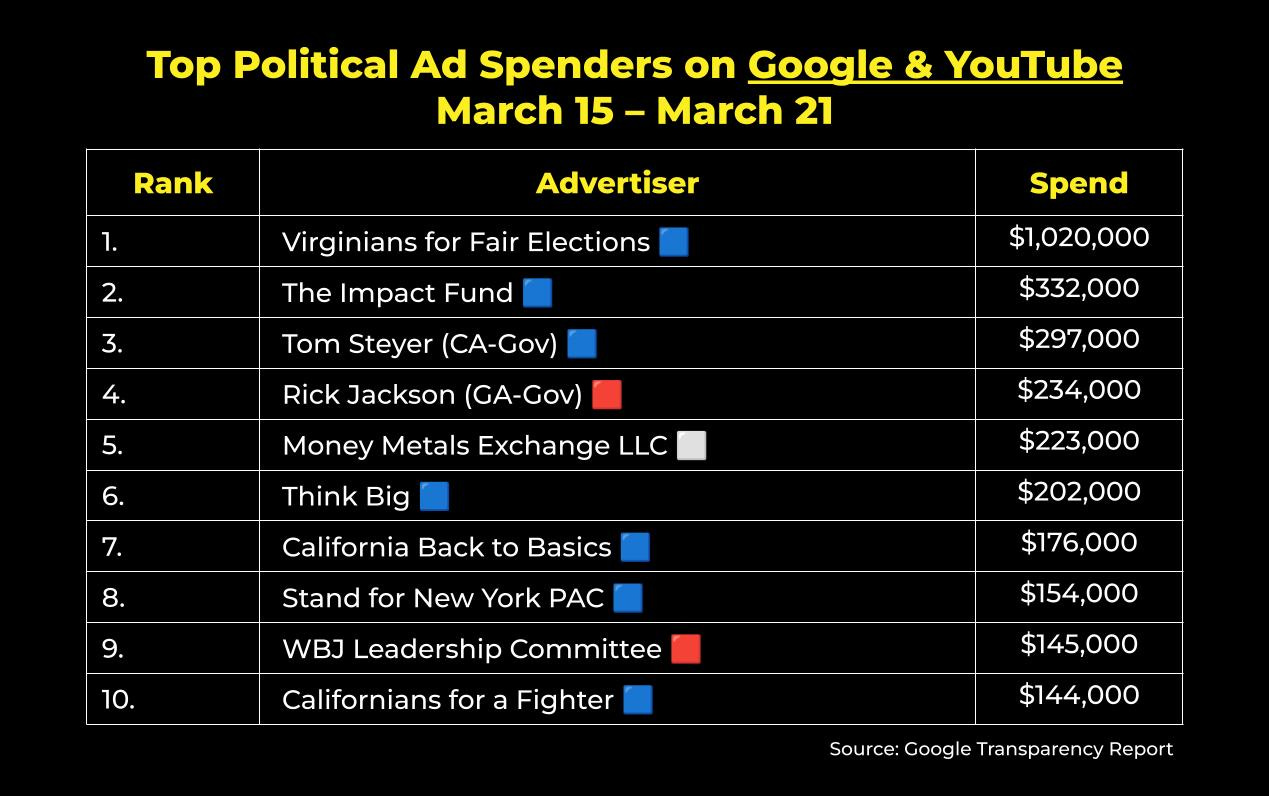

Meanwhile, political advertisers spent just over $6.8 million on Google and YouTube ads last week. These were the top ten spenders nationwide:

The primary to succeed California Governor Gavin Newsom is coming up on June 2nd, and in case you haven't been following that shitshow, allow us to catch you up. So far, there isn't a clear frontrunner in the race, and this past Tuesday, USC canceled a planned debate featuring five white candidates amid criticism that its criteria were purposely designed to exclude candidates of color. In the aftermath of the decision, Tom Steyer said he would host a self-funded town hall for the whole squad that same night. Ultimately, that too did not pan out due to "logistical challenges."

So now, here we are. Ballots will begin going out to voters in just over five weeks, and California's jungle primary system means that the top two vote-getters in June, regardless of party, advance to the general election. As it stands, polling shows the fifty jillion Democratic candidates splitting the vote so badly that Republicans Steve Hilton and Chad Bianco could find themselves facing off in November (which would frankly be embarrassing for all involved).

For the most part, billionaire Tom Steyer has been the only gubernatorial candidate to consistently make Google and YouTube ad investments worthy of top ten honors. This month, he’s been joined by independent committees backing Matt Mahan (“Deliver for California” and “California Back to Basics”), and now this week, we can add “Californians for a Fighter,” a PAC supporting Eric Swalwell’s campaign, to the list. It’s safe to assume there will be a lot more activity from California gubernatorial hopefuls online and across the airwaves over the next few months. Will it be enough to avoid a CADEM crisis in November? We shall see.

On X (formerly Twitter), political advertisers in the U.S. have spent around $1.8 million on ads in 2026. According to X’s political ad disclosure, here are the top spenders year to date:

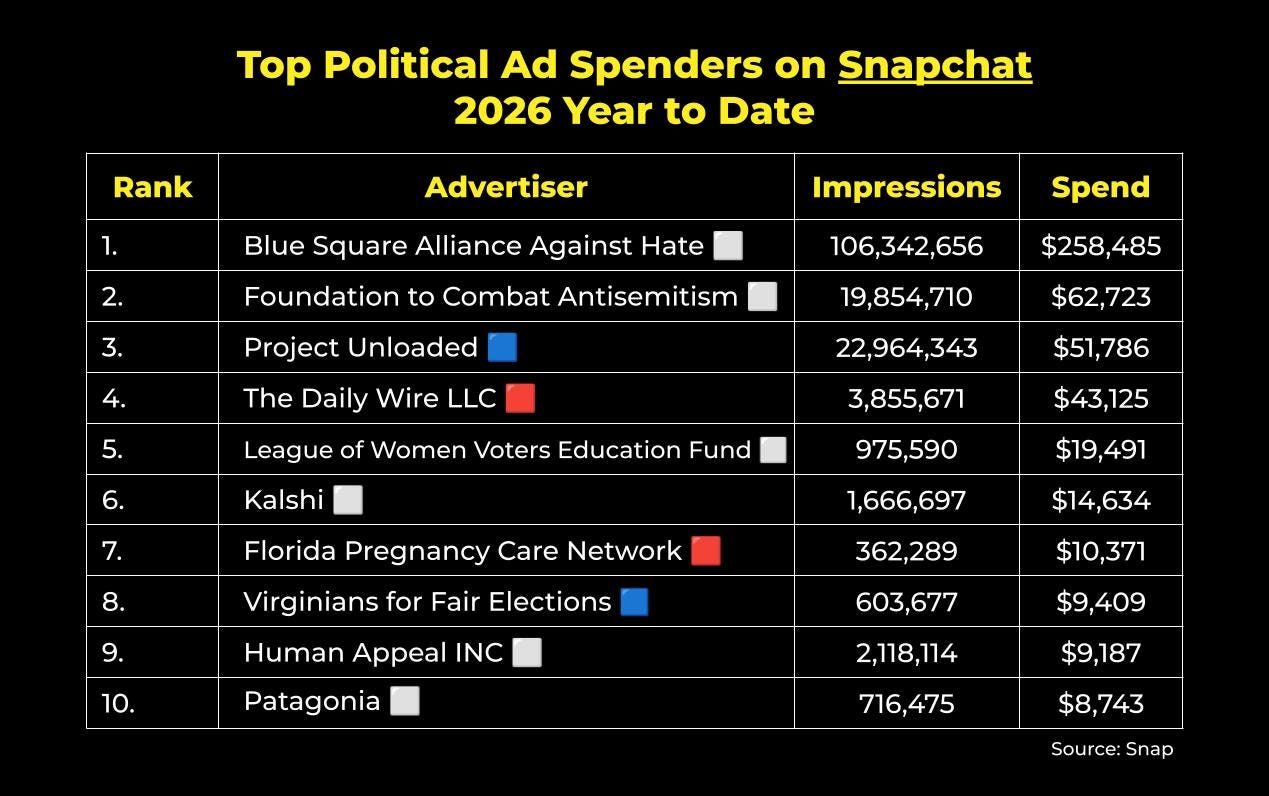

…and lastly, on Snapchat, political advertisers in the U.S. have spent just over $583,000 on ads in 2026. Here are the top spenders year to date:

Faster than a Fact-Check: Our 12-Minute Experiment in AI Candidate Profiles

From our earlier research, we know that chatbot sourcing isn't random. It follows patterns. Across models, there's a clear preference for neutral sources like Wikipedia, Ballotpedia, and the Associated Press. But there are patterns within each model as well. ChatGPT, for instance, frequently cites its media partner Axios but almost never cites NPR. And none of the three (ChatGPT, Gemini, or Grok) cites the New York Times very often.

This means that by selecting a chatbot, users are also (often unknowingly) selecting into a specific information ecosystem.

Citations matter because they appear to shape what information shows up in chatbot responses and how it is framed. For example, when responses cite a candidate's own website, they tend to reflect the same tone and substance, a huge advantage for candidates looking to control their narrative. While we have not yet shown that this relationship is causal, the correlation is hard to ignore.

How does information actually make its way into a chatbot response?

There are three paths for information to surface in a chatbot response when a user asks about politics: via training data, the system prompt, or web search.

Of these, web search (when a model gathers information from the internet live before responding to a query) is the easiest for campaigns and candidates themselves to influence. In our testing, when users ask about politics and current events, chatbots tend to rely heavily on web search, since it allows them to overcome their knowledge cutoff (the date beyond which a model has no training data).

But unlike traditional search, where users can click through links and evaluate sources themselves, chatbot responses often present information as authoritative fact. That makes the speed at which new web content filters into those responses especially critical.

So how long does it take for something posted online to become something a chatbot tells a voter?

In the case of OpenAI: twelve minutes.

The setup and model uptake

We created two Wikipedia pages for candidates we thought met Wikipedia's notability guidelines but didn't yet have pages: Gregg Hull, a Republican running for governor of New Mexico, and Hallie Shoffner, a Democrat running for Senate in Arkansas. We took care to ensure these pages conformed to all Wikipedia rules related to sourcing, content, and neutrality. They were not overtly partisan and did not cite sources that Wikipedia generally disallows, like campaign sites. (Full disclosure: despite our best efforts to follow the rules, moderators later deleted one of the pages… we'll let you figure out which one.)

We created the pages at 10:18pm on Sunday, March 8, 2026. At the same time, we launched a monitoring script that sent the prompt "Tell me about [CANDIDATE]" to each chatbot at regular intervals.
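For readers curious what a monitor like this involves, here is a rough sketch of a polling loop. It is a minimal illustration under stated assumptions, not Caucus AI's actual script: `ask_chatbot` is a hypothetical function that sends a prompt to one model and returns the URLs its answer cited, and the one-minute interval and 24-hour cap are arbitrary choices.

```python
import time
from datetime import datetime, timezone
from typing import Callable, Optional
from urllib.parse import urlparse

# Hypothetical interface: takes a prompt, returns the URLs the answer cited.
AskFn = Callable[[str], list[str]]


def cites_domain(urls: list[str], domain: str) -> bool:
    """True if any cited URL is hosted on `domain` or one of its subdomains."""
    for url in urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def first_citation_time(
    ask_chatbot: AskFn,
    candidate: str,
    domain: str = "wikipedia.org",
    interval_s: float = 60.0,   # assumption: poll once a minute
    max_polls: int = 1440,      # assumption: give up after ~24 hours
    sleep: Callable[[float], None] = time.sleep,
    now: Callable[[], datetime] = lambda: datetime.now(timezone.utc),
) -> Optional[datetime]:
    """Repeat "Tell me about <candidate>" until an answer cites `domain`.

    Returns the timestamp of the first citing answer, or None if no answer
    cites the domain within `max_polls` attempts.
    """
    prompt = f"Tell me about {candidate}"
    for attempt in range(max_polls):
        if cites_domain(ask_chatbot(prompt), domain):
            return now()
        if attempt < max_polls - 1:  # no need to wait after the last poll
            sleep(interval_s)
    return None
```

Passing `sleep` and `now` in as parameters keeps the loop testable with a fake chatbot. In practice you would also log every response, not just the first one that cites Wikipedia, since the later shifts in sourcing are what the analysis depends on.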

At 10:30pm – just 12 minutes later – OpenAI’s GPT 5.2 chat began heavily citing the new Wikipedia pages. Grok and Gemini, on the other hand? Still nothing, as of this writing.

How the answers changed

Once Wikipedia entered the mix, GPT’s responses changed fast:

Wikipedia became the backbone of both candidates' summaries, making up between one-fifth and two-thirds of all web citations and crowding out the wider variety of sources the model had cited before.

GPT didn't just cite Wikipedia; it copied from it. Nearly every response about Hull opened with his Wikipedia intro: "Gregg Hull is an American politician and businessman who served as Mayor of Rio Rancho, New Mexico, from 2014 to 2026. He is the longest-serving mayor in the city's history." The summaries repeated biographical details, like the number of grandchildren he has, word for word.

Responses got flatter. Because Wikipedia pages are themselves summaries of other sources, GPT was essentially summarizing a summary. Responses became less detailed and cited fewer sources.

Opposition research faded. For Shoffner, the initial responses included partisan sources like the NRSC, Daily Wire, and Free Beacon. After her page went up, those still appeared occasionally but were often passed over in favor of Wikipedia.
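Shifts like these can be quantified with a few lines of code. The sketch below assumes each logged response is stored as its answer text plus a list of cited URLs (an assumption for illustration, not a description of Caucus AI's pipeline). `citation_shares` computes each domain's share of web citations across a batch of responses, and `longest_shared_passage` uses Python's standard-library `difflib` to find the longest stretch a response shares verbatim with a source such as a Wikipedia intro.

```python
from collections import Counter
from difflib import SequenceMatcher
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Crude normalization: keep the last two labels of the host.

    Assumption: fine for en.wikipedia.org or www.axios.com, but wrong for
    multi-part suffixes like .co.uk (a real analysis would use a
    public-suffix list).
    """
    host = (urlparse(url).hostname or "").lower()
    return ".".join(host.split(".")[-2:])


def citation_shares(responses: list[list[str]]) -> dict[str, float]:
    """Each domain's share of all web citations pooled across `responses`."""
    counts = Counter(domain_of(u) for cited in responses for u in cited)
    total = sum(counts.values())
    return {d: n / total for d, n in counts.items()} if total else {}


def longest_shared_passage(response: str, source: str) -> str:
    """Longest run of characters that appears verbatim in both texts."""
    # autojunk=False stops difflib from ignoring frequent characters in long texts.
    matcher = SequenceMatcher(None, response, source, autojunk=False)
    m = matcher.find_longest_match(0, len(response), 0, len(source))
    return response[m.a : m.a + m.size]
```

Comparing `citation_shares` for batches collected before and after a page goes live shows whether one source is crowding out the others, and the length of the returned dictionary counts distinct domains, a rough gauge of how much the source mix narrowed.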

What this means

AI search matters: it is a powerful, brand-new pipeline for getting political information to voters.

This experiment shows how quickly new Wikipedia content can flow into chatbot responses. It stands to reason that the same may be true of many other websites, not just the one we tested.

There is plenty of ongoing research into biased training data, post-training adjustments, and how they shape AI outputs. Those questions are important. But we also need to understand how web content filters into AI answers, and that need is immediate.

What we found is simple (albeit a little unsettling): new web content enters some AI responses quickly, unvetted, and without anyone on the other side watching. Chatbots also strongly prefer certain sources of information over others. Understanding these preferences is critical for digital and communications professionals who want to maximize the reach of the coverage they generate.

Google AI Overviews now reach over 2 billion people a month worldwide. Two-thirds of American teenagers (aka future voters) use chatbots. This is increasingly where people go to learn about candidates, and in our case, it took twelve minutes and one new Wikipedia page to reshape what they'd find.

Campaigns and political organizations should be thinking about how to get their own information into the right places. That means understanding what sources chatbots are pulling from when voters ask about their candidates and how to influence the way those sources are ranked. The models are already assembling narratives about every candidate on the ballot from whatever they find online. And while we still have a lot to learn about how to shape that process, ignoring it isn’t an option.

To be very clear: We do not recommend campaigns edit their own Wikipedia pages.

Yes, you read that correctly. Now read it again.

What campaigns should be doing is paying attention to what is on their Wikipedia pages and understanding how coverage of their candidate from different outlets is surfacing in chatbots and AI summaries.

What content do you have the most control over? Your candidate's own website. So make sure it is accessible to AI chatbot crawlers, contains plenty of clear, authoritative facts and figures, and is machine-readable.
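One quick check on the "accessible to AI chatbot crawlers" point is whether your site's robots.txt lets those crawlers in. Below is a minimal sketch using Python's standard-library `urllib.robotparser`. `GPTBot` and `OAI-SearchBot` are crawler names OpenAI has published, but every vendor documents its own user agents and they change, so treat the list as an assumption to verify. Passing robots.txt is also only a precondition; it does not guarantee a page gets cited.

```python
from urllib.robotparser import RobotFileParser

# Assumption: OpenAI's published crawler tokens; add other vendors' agents
# after checking their documentation.
AI_AGENTS = ("GPTBot", "OAI-SearchBot")


def ai_crawler_access(robots_txt: str, page_url: str, agents=AI_AGENTS) -> dict[str, bool]:
    """Report whether each user agent may fetch `page_url` under `robots_txt`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    parser.modified()  # mark the rules as loaded so can_fetch() uses them
    return {agent: parser.can_fetch(agent, page_url) for agent in agents}


example = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(ai_crawler_access(example, "https://example.com/issues"))
# prints {'GPTBot': False, 'OAI-SearchBot': True}
```

Parsing a string rather than fetching lets you test a draft robots.txt before deploying it; to check a live site, `RobotFileParser.set_url()` followed by `read()` downloads and parses the published file instead.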

In an age when AI search is becoming people's go-to source of information, if your content isn't making it into chatbot answers, it might as well not exist.

You can explore our tool and/or reach out at contact@caucus-ai.com if you’re working in this space – we’d love to hear from you!

Are you a mission-aligned company or organization?

COURIER would love to partner with you and amplify your work to our engaged audience of 190,000+ policy influencers and high-information active news consumers. Send a note to advertising@couriernewsroom.com for more.

That’s it for FWIW this week. This email was sent to 25,659 readers. If you enjoy reading this newsletter each week, would you mind sharing it on X/Twitter, Threads, or Bluesky? Have a tip, idea, or feedback? Reply directly to this email.

Support COURIER’s Journalism

With newsrooms in eleven states, COURIER is one of the fastest-growing, values-driven local news networks in the country.

We need support from folks like you who believe in our mission and support our unique journalism model. Thank you.